Part two of the DevOps series. In the first installment, we explained what DevOps is and why your organization would benefit from adopting it. In this second and final installment, we cover the changes DevOps brings to company culture and the best tools for DevOps success.

Agile development

What is the difference between DevOps and Agile? The boundaries between the two are not always easy to see; one could also say that DevOps builds on agile methods. Agile tries to eliminate unnecessary and burdensome software development processes that can slow development down and hurt the quality of features. Two popular agile methods, Scrum and Kanban, both help development teams work in fast iterations by breaking down complex tasks and simplifying development, testing, and product launch. While Scrum focuses on time management, working in fixed-length sprints, Kanban offers more flexibility in how long tasks take to process. DevOps goes beyond Agile: it aims for higher efficiency not only in meeting feature requirements but also in meeting system requirements. System requirements cover everything needed to run new features in a production system so that they succeed for the customer, for example correct documentation, analytics, and analysis of dependent systems.

DevOps is thus an extension of agile methods. It is possible, but much more difficult, to introduce DevOps without agile methods. With their iterative processes, agile methods support DevOps better than other software development methods, such as the waterfall model. Although success is possible with other methods, software development projects are typically more successful with agile methods.

Organizational changes

Corporate culture

The roots of DevOps lie in development and operations, but DevOps has grown into a concept that extends beyond IT. Effective DevOps means that the entire company collaborates better. A successful introduction creates a virtuous circle, a “circulus virtuosus”: DevOps enables higher productivity, so the DevOps team implements product ideas more frequently, which in turn increases job satisfaction and improves the performance of the entire company. “Look at the cultural side,” said Andi Mann, chief technology advocate at Splunk. “Changing corporate culture is no small thing. It’s adopting shared values, systems, and thinking. It’s accepting the consequence of having smaller deliverables and iterating faster on them.”

Team structure

DevOps places the focus on the team rather than on individual specialists. In traditional teams, specialization leads to silos. In one team, for example, one person handles the database, another the hardware, and a third is the Windows admin. But this specialization also means that several team members have to be available for every task and must synchronize their work. DevOps doesn’t mean that responsibilities disappear, only that roles often rotate. This gives everyone the opportunity to keep learning and expanding their skills. The cross-functional team is one of the success factors for DevOps: its members develop a collaborative attitude focused on eliminating problems instead of assigning blame. A cross-functional team can include members from a range of disciplines, such as software developers, system administrators, quality assurance specialists, and product managers. Because the entire team is responsible for the work, developers can no longer simply “hand off” their code.

Communication and exchange

Communication and the exchange of knowledge and information are important parts of DevOps, because they enable efficient collaboration within the team. For that reason, metrics about performance and processes should be made available to all interested parties early on. Exchange outside the company is also beneficial, whether that means participating in open source projects and conferences or writing blog posts about the team’s projects. The team can take pride in its work and share it with others. A dialog with the outside world offers further opportunities: it is a good way to get feedback, and it helps attract talented new employees along the way.

TOOLS & METHODS

Monitoring & Analytics

In DevOps, automation is often in the spotlight, but monitoring and analyzing applications in the various lifecycle phases is at least as important. Metrics aren’t just important for production; they also inform revisions and improvements to features and business plans. The continuous feedback loop is an essential part of DevOps; it allows companies to be more agile and to better address customer needs. New Relic and Datadog are two of the better-known metrics-based tools. But even good tools won’t replace a well-thought-out monitoring approach. Monitoring should not be done for its own sake; instead, teams need to consider exactly which metrics are valuable. When a file-sharing service measures user engagement, for example, it is important to see whether users upload files. For an online dating platform, by contrast, the number of registered and logged-in users and their interactions is a better indicator of success or failure. Each company has to decide for itself which metrics matter. Typical targets of monitoring include development milestones, weak points, deployments, application logs, server health, and user activity.
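To make the file-sharing example concrete, here is a minimal Python sketch of one business-specific metric: the share of active users who uploaded at least one file. It assumes a hypothetical event log of `(user_id, event_type)` pairs; the event names and the metric definition are illustrative assumptions, not part of New Relic, Datadog, or any other product.

```python
from typing import Iterable, Tuple

# Hypothetical event record: (user_id, event_type). The event names are assumptions.
Event = Tuple[str, str]


def upload_rate(events: Iterable[Event]) -> float:
    """Share of active users (users with any event) who uploaded at least one file."""
    active_users = set()
    uploaders = set()
    for user_id, event_type in events:
        active_users.add(user_id)
        if event_type == "file_uploaded":  # assumed event name
            uploaders.add(user_id)
    # Avoid dividing by zero when there was no activity at all.
    if not active_users:
        return 0.0
    return len(uploaders) / len(active_users)


events = [
    ("alice", "login"),
    ("alice", "file_uploaded"),
    ("bob", "login"),
    ("carol", "file_shared"),
]
print(f"upload rate: {upload_rate(events):.2f}")
```

A dating platform would swap in a different definition, for example counting users who sent a message, which is exactly the per-company decision described above.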

Miniclip, a developer of digital and mobile games, emphasizes that not only its products but also the work of developing them should be fun. However, the lack of actionable information about the performance of their code was anything but a pleasure for the Miniclip developers. “The monitoring software we had only showed us server information,” reported Gary Rutland from Miniclip. “We couldn’t really understand how we could use the information to identify problems in our applications.” As part of introducing DevOps, Miniclip adopted a more robust application performance monitoring tool, which lets it quickly identify problems with capacity or features. “Now we’re able to rewrite code rather than just throwing more infrastructure at a problem, which is costly and time-consuming,” said Dave Shanker from Miniclip.

Infrastructure as Code

Infrastructure as Code (IaC) is an approach in which IT infrastructure is provisioned and managed automatically through code instead of manual processes. Executable source code replaces static documentation. With Ansible, an IT management and configuration tool, IaC can, for example, install a MySQL server, verify that MySQL is running correctly, create a user account and password, set up a new database, and remove unnecessary databases, all through code. IaC also makes it possible to apply software development practices such as code reviews, continuous integration, and unit testing to infrastructure. The infrastructure not only becomes more reliable but is also opened up to team members who traditionally wouldn’t deal with infrastructure, so they can now help manage systems as well.
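The core idea behind tools like Ansible is to declare a desired state and let the tool compute and apply only the changes needed. The following Python sketch illustrates that idea for the database part of the MySQL example. It is not Ansible’s API: the `plan` and `apply` functions, the `Action` type, and the list of protected system schemas are assumptions for illustration, and the “server” is just an in-memory set of database names.

```python
from dataclasses import dataclass
from typing import List, Set

# System schemas this sketch never drops (assumed list for a MySQL server).
PROTECTED = frozenset({"mysql", "information_schema", "performance_schema", "sys"})


@dataclass(frozen=True)
class Action:
    kind: str      # "create" or "drop"
    database: str


def plan(desired: Set[str], current: Set[str]) -> List[Action]:
    """Compute only the changes needed to move from the current to the desired state."""
    creates = [Action("create", db) for db in sorted(desired - current)]
    drops = [Action("drop", db) for db in sorted(current - desired - PROTECTED)]
    return creates + drops


def apply(current: Set[str], actions: List[Action]) -> Set[str]:
    """Simulate applying the plan to an in-memory set of database names."""
    result = set(current)
    for action in actions:
        if action.kind == "create":
            result.add(action.database)
        elif action.kind == "drop":
            result.discard(action.database)
    return result


current = {"mysql", "information_schema", "test", "legacy_app"}
desired = {"shop"}

first = plan(desired, current)
print(first)
current = apply(current, first)
# Running the same definition again finds nothing left to do (idempotence).
print(plan(desired, current))
```

Because the plan is derived from the difference between desired and current state, the same definition can be run repeatedly and reviewed or unit-tested like any other code.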

Infrastructure as Code enables DevOps best practices, since developers become more involved in defining the configuration, while operations staff participate earlier in the development process. Infrastructure automation tools are often part of DevOps processes and reduce the complexity that manual configuration entailed. Previously, every developer or support employee would dread the moment when they had to reconfigure a server. With IaC, this is no longer a problem: in today’s age of the cloud, configuring a new server takes only moments and requires little more than an Internet connection and a credit card.

For the operations staff, Infrastructure as Code means new tools and new work methods. The approach is more familiar to people working in development, whereas traditional system administrators first have to get used to it. However, the point is not to turn every system administrator into an experienced programmer but rather to adopt a few software development processes and practices.

At the Walt Disney Company, for example, introducing configuration management tools (in this case Puppet Enterprise and Chef), cloud hosting, and infrastructure automation greatly changed how the teams perceived infrastructure management. The question changed from “How do I manage scaling?” to “How can I see infrastructure as code?” Infrastructure was no longer a series of machines that you log into but code that could be written to deliver scaling, agility, and solutions. Even better, this infrastructure code could be combined with application code, and everything could be controlled from a single platform.

Infrastructure as Code tools

At its core, Infrastructure as Code represents a shift from manual processes to automated procedures. The goal is to bring the company’s development and operations teams together and to make IT operations automatable and programmable, using jointly designed infrastructure and procedures that closely resemble those used to develop application code. A number of tools support IaC. Developers can work with IaC as well, since infrastructure code is simply code they can write. The declarative languages used by tools such as Ansible are easy to learn.
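Treating infrastructure like application code means it can be checked automatically before it is applied, just as application code is unit-tested in continuous integration. The following Python sketch validates a list of server definitions; the definition format (`name`, `size`, `open_ports`) and the allowed sizes are hypothetical and not tied to Ansible, Chef, Puppet, or SaltStack.

```python
from typing import Any, Dict, List

# Hypothetical, tool-agnostic server definitions as a team might keep them in version control.
ALLOWED_SIZES = {"small", "medium", "large"}


def validate_server(definition: Dict[str, Any]) -> List[str]:
    """Return human-readable problems for one definition; an empty list means valid."""
    problems = []
    name = definition.get("name")
    if not isinstance(name, str) or not name:
        problems.append("missing or empty 'name'")
    if definition.get("size") not in ALLOWED_SIZES:
        problems.append(f"{name}: size must be one of {sorted(ALLOWED_SIZES)}")
    for port in definition.get("open_ports", []):
        # bool is a subclass of int in Python, so reject it explicitly.
        if isinstance(port, bool) or not isinstance(port, int) or not 1 <= port <= 65535:
            problems.append(f"{name}: invalid port {port!r}")
    return problems


def validate_all(definitions: List[Dict[str, Any]]) -> List[str]:
    """Validate every definition and check that server names are unique."""
    problems = []
    for definition in definitions:
        problems.extend(validate_server(definition))
    names = [d.get("name") for d in definitions if isinstance(d.get("name"), str)]
    duplicates = sorted({n for n in names if names.count(n) > 1})
    problems.extend(f"duplicate server name: {n}" for n in duplicates)
    return problems


servers = [
    {"name": "db1", "size": "medium", "open_ports": [3306]},
    {"name": "web", "size": "huge", "open_ports": [80, 70000]},
    {"name": "web", "size": "small"},
]
for problem in validate_all(servers):
    print(problem)
```

In a CI pipeline, a non-empty result would fail the build (for example through a non-zero exit code), just as a failing unit test would.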

Ansible and alternatives

Ansible uses a very simple language, YAML. It positions itself as a lighter-weight, easier alternative to other tools and has a number of well-known customers, including Twitter, Spotify, and Evernote, most of which use the enterprise variant, Ansible Tower. Ansible does without the usual master-agent model and manages networked computers over SSH, which simplifies rollouts.

Chef

Chef is based on Ruby and is offered in community and enterprise editions, both on-premises and on demand. Admins first have to set up the Chef Development Kit before they can install the Chef agents on the nodes via SSH. Chef uses Ruby as a domain-specific language (DSL), so no additional configuration language is needed.

Puppet

Puppet is one of the most established modern IT automation tools and has been on the market since 2005. It covers the entire automation spectrum, with an extensive selection of modules, graphical interfaces, and plug-ins. Puppet is based on Ruby but uses its own language, which must be learned first. It is available in an open source and an enterprise version.

SaltStack

SaltStack is based not on Ruby but on Python and, together with Ansible, is among the leading automation and orchestration solutions currently on the market. It includes a wide range of useful commands and modules for installing and configuring software packages.

Containers

A container holds a complete runtime environment: an application together with all its dependencies, libraries, and other binaries and configuration files, bundled in one package. Containers provide a uniform environment and tooling. They are among the most important tools in the DevOps workflow, since they redefine how applications are deployed and how cloud infrastructure is used. They make it easy to package code and push it into the release pipeline, which streamlines the DevOps workflow.

Before BBC News introduced containers, it took up to 30 minutes just to schedule jobs and then another 30 minutes to complete them. “Something broke during the company Christmas party. We had two people huddled in a corner with a computer, their food and drinks just trying to fix it and get the job scheduled to run again,” said Simon Thulbourn from BBC News. The jobs were handled sequentially, one after the other, so scheduling problems and errors were routine and a lot of development time was lost. By introducing Docker containers, BBC News eliminated the 30-minute scheduling delay, and it can now run several jobs simultaneously.
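The gain from running jobs simultaneously instead of one after the other can be sketched with Python’s standard `concurrent.futures` module. The jobs here are hypothetical placeholders that only sleep; the sketch illustrates the concurrency principle, not how BBC News or Docker actually schedules work.

```python
import time
from concurrent.futures import ThreadPoolExecutor
from typing import List, Tuple

# Hypothetical jobs: (name, duration in seconds). Sleeping stands in for real work.
Job = Tuple[str, float]


def run_job(job: Job) -> str:
    name, duration = job
    time.sleep(duration)
    return f"{name} done"


def run_sequentially(jobs: List[Job]) -> List[str]:
    """One job after the other: total time is roughly the sum of all durations."""
    return [run_job(job) for job in jobs]


def run_concurrently(jobs: List[Job], max_workers: int = 4) -> List[str]:
    """Several jobs at once: with enough workers, total time approaches the longest job."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # map() returns results in the same order as the input jobs.
        return list(pool.map(run_job, jobs))


if __name__ == "__main__":
    jobs = [(f"job-{i}", 0.2) for i in range(4)]
    for runner in (run_sequentially, run_concurrently):
        start = time.perf_counter()
        results = runner(jobs)
        elapsed = time.perf_counter() - start
        print(f"{runner.__name__}: {len(results)} jobs in {elapsed:.2f}s")
```

With four equal jobs and four workers, the concurrent run should take roughly a quarter of the sequential time; exact timings depend on the machine.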

What is the difference between virtualization and containers?

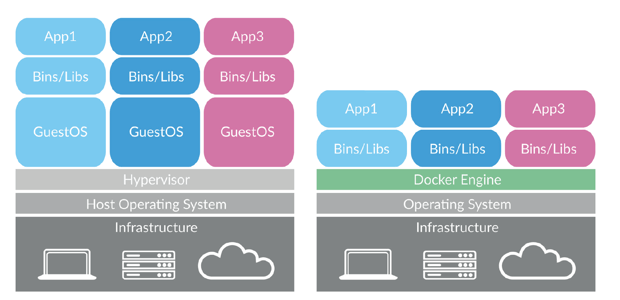

Virtual machines

As server processing power and capacity grew, virtual machines were developed, in which software running on a physical server emulates a particular hardware system. A hypervisor, or virtual machine monitor, sits between the operating systems and the hardware. This software, firmware, or hardware creates and runs the virtual machines and is required to virtualize the server. Each virtual machine runs its own operating system, so virtual machines with different operating systems can run on the same physical server. Each virtual machine also has its own binaries, libraries, and applications, and can be many gigabytes in size. The advantage of virtual machines over dedicated physical servers is that applications can be consolidated onto a single system. The reduced hardware footprint, faster server provisioning, and improved disaster recovery result in savings.

Containers

Containers sit on top of a physical server and its operating system. In contrast to virtual machines, containers share the host’s operating system kernel and usually its binaries and libraries as well. These shared components are read-only. Containers are extraordinarily “light”: they are often only megabytes in size and can start in fractions of a second. Their advantages are speed and lightness, and a server can accommodate more containers than virtual machines. Containers accelerate development and testing by packaging applications and their dependencies quickly while reducing management overhead: sharing the operating system means, among other things, that there are fewer bugs to fix and patches to install. Containers also behave very consistently across environments, and most container tooling is free and open source.

Figure 1: Virtual machines (left) and containers (right)


Docker and alternatives

Many people consider Docker synonymous with containers. It helps DevOps teams deliver software more efficiently by solving many of the problems of virtualization. The typical software delivery process looks like this:

- Design the app

- Write the code

- Build the code in a test environment

- Test it

- Package and deliver the tested app

The step that changes most substantially when Docker is used in development is the last one, packaging and delivery: Docker allows developers to package the application in a container, a software-defined environment that is easily portable and abstracted from the host system. One advantage of delivering the application as a Docker image is that it only has to be built once, which keeps test and production environments consistent. Docker also enables greater modularity, which pays off particularly with microservices, in which complex applications are divided into separate units. For example, the database can run in one container and the front end of the application in another. This approach makes the application modular and reduces the complexity of management and updates, since a problem or change in one part of the application does not require reworking the whole app.
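The “build once” principle and the resulting consistency between test and production can be sketched in Python. This hypothetical pipeline builds an artifact a single time, identifies it by a SHA-256 content digest (loosely similar to how Docker identifies images by digest), and promotes that identical artifact from test to production. The `Pipeline` class and its methods are assumptions for illustration and do not call Docker.

```python
import hashlib
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class Pipeline:
    """Hypothetical pipeline that builds an artifact once and promotes it unchanged."""

    deployed: Dict[str, str] = field(default_factory=dict)  # environment -> artifact digest
    builds: int = 0

    def build(self, source_files: Dict[str, bytes]) -> str:
        """Bundle the files and return a content digest that identifies the artifact."""
        self.builds += 1
        digest = hashlib.sha256()
        for path in sorted(source_files):  # sorted so the same input gives the same digest
            content = source_files[path]
            digest.update(path.encode() + b"\0")
            digest.update(len(content).to_bytes(8, "big"))  # length prefix avoids ambiguity
            digest.update(content)
        return "sha256:" + digest.hexdigest()

    def deploy(self, environment: str, artifact: str) -> None:
        self.deployed[environment] = artifact

    def promote(self, source_env: str, target_env: str) -> None:
        """Ship exactly the artifact that was tested; nothing is rebuilt here."""
        self.deployed[target_env] = self.deployed[source_env]


pipeline = Pipeline()
artifact = pipeline.build({"app.py": b"print('hello')", "requirements.txt": b"flask\n"})
pipeline.deploy("test", artifact)
# ... run the tests against the "test" environment ...
pipeline.promote("test", "production")
print(pipeline.deployed["test"] == pipeline.deployed["production"], pipeline.builds)
```

Promoting by digest rather than rebuilding is what guarantees that production runs the exact bits that passed testing.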

Open Container Initiative (OCI)

The Open Container Initiative was launched in 2015 to create open industry standards for container formats and runtimes. Docker, Google, Amazon, Facebook, IBM, and Red Hat support the OCI.

Kubernetes

Kubernetes is an open-source container management platform originally developed by Google. It runs on most cloud providers, such as AWS and Google Cloud.

Figure 2: Selected DevOps tools

FIRST STEPS

DevOps is not a cure-all for every problem in software development; introducing it will not magically increase velocity and reduce errors overnight. DevOps changes the entire culture of the company, so it is better to introduce it step by step. Start with a few valuable projects that are not mission-critical; this lets the company see the clear advantages of DevOps. Once DevOps has succeeded in smaller projects, you can introduce it for higher-priority work. If you are not practicing DevOps yet, a good first step is to suggest that members of the operations team spend a few days with the development team and participate in their stand-ups or sprint retrospectives. Invite developers to take part in meetings about system failures and operations planning so that they get to know the most important problems of the business. Publish development progress reports for the entire company, and invite everyone to read them.

Always keep in mind that DevOps is primarily about changing the culture to build more responsible organizations that can adapt quickly. It is also about taking full advantage of modern technologies to replace time-consuming, error-prone manual activities with automation. And DevOps is about exchanging and sharing information, which changes not only the results of your work but also how you work. Take the opportunity to be part of this shift, and advance your company with DevOps.